Background

In Optimizing Optimizely Part 1, we uncovered how the implementation of the snippet affects the customer experience and discussed best practices for implementing the Optimizely snippet. In this article, we look at further optimizations that improve web performance for client-side, in-browser implementations.

Sizing the Optimizely snippet

Part 1 covered how long the snippet can take to download and be processed by the browser. A key tenet of web performance is ‘only download what the web page needs’, because doing so makes the best use of both network bandwidth and browser CPU.

Optimizely experiments are delivered by executing the JavaScript snippet, and the experiments themselves are generally developed by the Marketing or Digital team responsible for Optimizely. Because this manual process covers every stage of an experiment’s life-cycle, focusing on how experiments are developed and managed should make it possible to optimize the snippet size and keep it as small as possible.

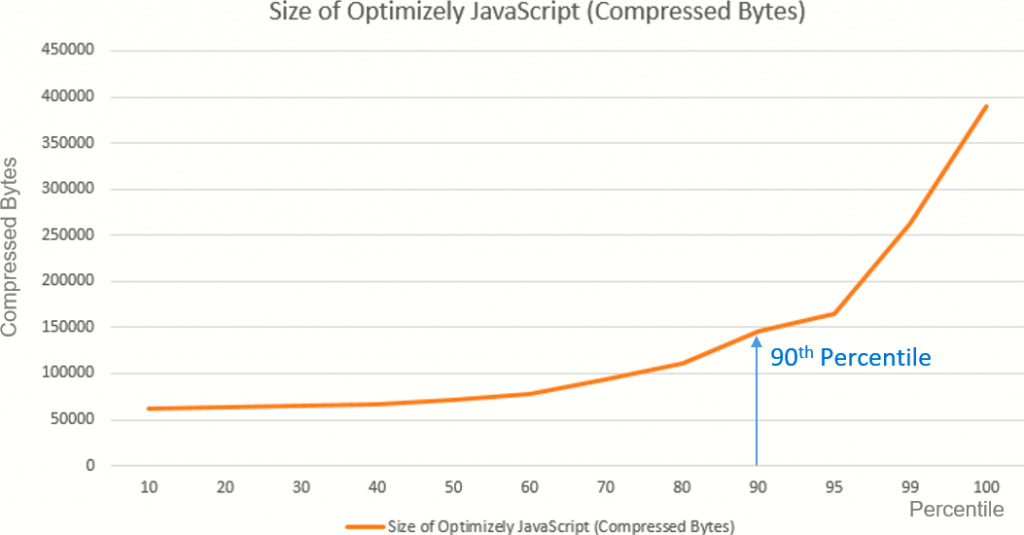

Figure 1, based on HTTP Archive data, shows a wide range of snippet sizes being deployed, from 60 KB to almost 400 KB. Even as compressed values these are large downloads in their own right, and they must also be uncompressed, parsed and executed by the client CPU: even a 147 KB gzipped download (90th percentile) expands to 688 KB of code to be parsed and executed.
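One way to check these figures for your own site is the browser’s Resource Timing API, whose `PerformanceResourceTiming` entries expose `transferSize`, `encodedBodySize` and `decodedBodySize`. The sketch below summarizes those values for the snippet. It assumes the snippet URL contains `cdn.optimizely.com/js/` (adjust this to match your own snippet), and `reportSnippetSize` is a hypothetical helper, not part of any Optimizely API. Cross-origin sizes are only exposed when the server sends a `Timing-Allow-Origin` header; otherwise they read as zero and the sketch reports nothing.

```typescript
// Structural subset of PerformanceResourceTiming, so the sketch also runs outside a browser.
interface ResourceSizeEntry {
  name: string;            // resource URL
  transferSize: number;    // bytes fetched over the network (0 if served from the local cache)
  encodedBodySize: number; // compressed (e.g. gzip) body size in bytes
  decodedBodySize: number; // body size in bytes after decompression
}

interface SnippetSizeReport {
  compressedKB: number;
  uncompressedKB: number;
  expansionRatio: number;  // how much code the CPU handles per compressed byte
  servedFromCache: boolean;
}

// Hypothetical helper: find the snippet entry by URL fragment and summarize its sizes.
function reportSnippetSize(
  entries: ResourceSizeEntry[],
  urlFragment: string,
): SnippetSizeReport | undefined {
  const entry = entries.find((e) => e.name.includes(urlFragment));
  // No matching entry, or sizes hidden (reported as 0) for a cross-origin resource.
  if (!entry || entry.encodedBodySize === 0) return undefined;
  return {
    compressedKB: entry.encodedBodySize / 1024,
    uncompressedKB: entry.decodedBodySize / 1024,
    expansionRatio: entry.decodedBodySize / entry.encodedBodySize,
    servedFromCache: entry.transferSize === 0,
  };
}
```

In a browser, the entries would come from `performance.getEntriesByType("resource")`. For the 90th-percentile example above, the expansion ratio would be roughly 4.7 (688 / 147).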

As Optimizely X does not require jQuery, jQuery does not need to be loaded either before or as part of Optimizely. At a minimum, this saves over 30 KB of minified, gzipped jQuery code that would otherwise have to be downloaded and executed.

However, as a popular JavaScript library and a necessary component of Optimizely Classic, jQuery has been used extensively in developing experiments. It is a difficult habit to break: it provides many easy-to-use features, works across browsers, and is universally known and supported by the JavaScript community. Writing experiments in native JavaScript, by contrast, has no library prerequisite and can be far more efficient to load and execute. From a web performance and customer experience perspective, freeing Optimizely experiments from jQuery is therefore beneficial, because Optimizely loads and processes faster.
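As an illustration of moving away from jQuery, the sketch below rewrites a hypothetical jQuery variation, `$(".hero-cta").text("Start free trial").addClass("variant-b")`, using only native DOM calls. The selector, text, class name and the `applyVariation` helper are assumptions for illustration, not Optimizely APIs. The small structural interfaces let the sketch compile and run outside a browser; in a browser, `document` should satisfy `QueryRoot` and can be passed as the root.

```typescript
// Minimal structural types so the sketch compiles without the DOM lib.
// In a browser, `document` and the Elements it returns fit these shapes.
interface VariationTarget {
  textContent: string | null;
  classList: { add(...tokens: string[]): void };
}

interface QueryRoot {
  querySelectorAll(selectors: string): ArrayLike<VariationTarget>;
}

// Hypothetical jQuery original:
//   $(".hero-cta").text("Start free trial").addClass("variant-b");
// Native equivalent: no library has to load before the experiment code runs.
function applyVariation(
  root: QueryRoot,
  selector: string,
  text: string,
  className: string,
): number {
  const targets = root.querySelectorAll(selector);
  for (let i = 0; i < targets.length; i++) {
    targets[i].textContent = text;      // replaces jQuery .text()
    targets[i].classList.add(className); // replaces jQuery .addClass()
  }
  return targets.length; // number of elements changed
}
```

In a browser the call would be `applyVariation(document, ".hero-cta", "Start free trial", "variant-b")`; the same pattern extends to other common jQuery calls, such as replacing `.css()` with `element.style`.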

React Has Overheads Too!

Removing jQuery improves web performance for Optimizely users, but this gain is being negated by the adoption of React.js, or similar frameworks, in Optimizely snippet development.

There is no doubt that React can deliver a productivity gain for development engineers: they can develop and test new features in Optimizely and then consolidate them into the codebase with minimal code change.

However, from a web performance perspective, this approach has the same negative impact as jQuery.

Figure 2 shows React loading synchronously before Optimizely can be processed. The long periods of inactivity on the ‘Main’ thread show how the two React JavaScript libraries not only delay the loading and execution of Optimizely but also prevent the critical rendering path, such as HTML parsing, from progressing.

These delays can be similar to those observed with jQuery (as discussed in Part 1). Unfortunately, legacy experiments may still require jQuery to be present, adding to web performance delays until jQuery is completely removed.
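A quick way to check a page for this problem is to list its head scripts in document order and flag any synchronous library that appears before the snippet. In the sketch below, the library URL patterns, the `cdn.optimizely.com/js/` fragment and the `librariesBeforeSnippet` helper are assumptions to adapt to your own site. Scripts marked `async` or `defer` are skipped because they do not block HTML parsing.

```typescript
interface HeadScript {
  src: string;
  async?: boolean;
  defer?: boolean;
}

// Illustrative patterns for jQuery and React bundles (assumption; extend as needed).
const LIBRARY_PATTERNS: RegExp[] = [/jquery/i, /\breact\b/i];

// Returns the URLs of synchronous library scripts that precede the snippet,
// or null if the snippet is not found at all.
function librariesBeforeSnippet(
  scripts: HeadScript[],
  snippetFragment: string,
): string[] | null {
  const snippetIndex = scripts.findIndex((s) => s.src.includes(snippetFragment));
  if (snippetIndex === -1) return null;
  return scripts
    .slice(0, snippetIndex)
    .filter((s) => !s.async && !s.defer) // only parser-blocking scripts delay the snippet
    .filter((s) => LIBRARY_PATTERNS.some((p) => p.test(s.src)))
    .map((s) => s.src);
}
```

In a browser, the input could be built with `Array.from(document.head.querySelectorAll<HTMLScriptElement>("script[src]"), (s) => ({ src: s.src, async: s.async, defer: s.defer }))`. An empty result means no flagged library loads ahead of the snippet.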

Secondary Page Loading

The benefits of caching are well known, but when running experiments, caching the snippet for too long may leave experiments stale and out of date. Optimizely’s time-to-live (TTL) setting provides dynamic caching that keeps this under control: once the TTL has elapsed, the validity of the cached snippet is checked again. Consequently, website visitors benefit from a cached copy of the snippet that is reused as they move from page to page on the site.

The default TTL is 2 minutes, which may result in repeated downloads of the snippet if a session lasts longer than this and extends across several pages.

An alternative is to extend the TTL to a value just longer than your average session length. Options are limited, as the TTL can only be set in 5-minute intervals, so most websites will benefit from a setting of 5 or 10 minutes unless the snippet changes more frequently than that. However, be careful not to make this setting too long; otherwise the cache may not expire for some time, leaving website visitors unable to benefit from any updates you implement.
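The trade-off can be made concrete with a simplified model, assuming the TTL behaves like a cache lifetime: the cached snippet is reused while its age is below the TTL, and the first page view after that downloads it again. The model treats every expiry as a full download (in practice an expired copy may be revalidated more cheaply), and the page-view times and the helpers `countSnippetDownloads` and `ttlJustLongerThan` are hypothetical.

```typescript
// Simplified model: a cached copy is fresh while its age is below the TTL;
// the first page view after it expires downloads the snippet again.
function countSnippetDownloads(pageViewMinutes: number[], ttlMinutes: number): number {
  let downloads = 0;
  let fetchedAt: number | undefined;
  for (const t of [...pageViewMinutes].sort((a, b) => a - b)) {
    if (fetchedAt === undefined || t - fetchedAt >= ttlMinutes) {
      downloads++;
      fetchedAt = t;
    }
  }
  return downloads;
}

// Smallest TTL on a fixed step (5 minutes, matching the setting's granularity)
// that is strictly longer than the average session length.
function ttlJustLongerThan(avgSessionMinutes: number, stepMinutes = 5): number {
  return (Math.floor(avgSessionMinutes / stepMinutes) + 1) * stepMinutes;
}

// Hypothetical 8-minute session with six page views (times in minutes).
const session = [0, 1.5, 3, 4.5, 6, 8];
console.log(countSnippetDownloads(session, 2));  // 4 with the default 2-minute TTL
console.log(countSnippetDownloads(session, 10)); // 1 with a 10-minute TTL
console.log(ttlJustLongerThan(8));               // 10
```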

Wrapping it all up

In these two articles we have reviewed many aspects of optimizing Optimizely that you can investigate on your website to help improve web performance. The key recommendations are summarized below:

- Implement the JavaScript snippet in the Head section as a high priority resource.

- Ensure priority CSS resources are referenced in the HTML ahead of the Optimizely snippet.

- Do not use a tag manager for implementing the snippet.

- Review and remove unused and duplicate code from the JavaScript snippet.

- Write experiment code in native JavaScript, and remove all jQuery (or other framework library) code from the snippet.

- Ensure that jQuery, or any JavaScript library, does not load ahead of the Optimizely snippet.

- Remove redundant A/A tests once all the supporting development work has been completed.

- Review the TTL value for caching the snippet so that it reflects the length, in minutes, of the majority of visitor sessions.